If you’re in my business of reading logfiles to work out what is going on or what has happened, one of the most annoying, and frightening, things to see is a huge number of failed login attempts. In most cases these are genuine mistakes, where a lingering management application or forgotten script is still trying to log in to obtain one piece of information or another from the switch or fabric.

SAN switches usually sit well inside the datacentre management firewalls, so attacks from outside the company are less likely. However, security statistics over the last decade or so suggest that threats are more likely to originate from inside the company boundaries: employees mucking around with tools like nmap, MITM software like Cain & Abel, or even an entire Kali Linux distro hooked up to the network “just to see what it does because a mate of mine recommended it”.

In 99.999% of all install bases I have looked at, the normal embedded username/password mechanism is used for authentication and authorisation. This means that if security management is not configured on these switches, a not-so-good Samaritan can use brute-force tactics to try to obtain access without anyone knowing. When an external authentication mechanism like LDAP or TACACS+ is used, chances are there are monitoring procedures in place that alert on these kinds of symptoms. The main issue, however, is that by then the attack has already occurred, and there is no mechanism on the switch itself that prevents it.

It is fairly simple to overload a switch with authentication attempts by firing off thousands of ssh, telnet and http(s) sessions (easily done from any reasonably priced laptop with a Linux distro like Kali installed), thereby crippling the poor e500 CPU on the CP. This can have significant ramifications for the overall fabric services in that switch, which can bring down a storage network. Now, obviously there is a mechanism to try and prevent this via iptables, however there are a number of drawbacks.

IPtables

First of all, it is almost never configured and only the bare defaults are active (see the FOS manual). Even when it is configured to allow or block certain IP address ranges and ports, in many cases there is a sheer lack of maintenance, which makes these rule-sets outdated very quickly. Only very few storage environments have a well-maintained SAN. If I had to put a number on it, it would be below the 5% mark, so the use and maintenance of IP security rules on these switches is almost negligible. Brocade have improved the management of the IPtables rule base so that it can be distributed across a fabric with a single command, somewhat equivalent to Cisco’s CFS methodology, but the effectiveness of the security implementation stands or falls with keeping the rules up to date in a dynamic IP network.
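To illustrate why unmaintained rules age so badly, here is a minimal sketch of first-match allow-list evaluation. It is not how FOS evaluates its rules; the rule layout, the subnets and the `decide` helper are all assumptions made up for this example.

```python
import ipaddress

# Hypothetical allow-list: (source network, destination port).
# Anything not matched is dropped. This is an illustration, not FOS behaviour.
ALLOW_RULES = [
    (ipaddress.ip_network("10.10.0.0/24"), 22),
    (ipaddress.ip_network("10.10.0.0/24"), 443),
]


def decide(source, port, rules=ALLOW_RULES):
    """Return 'accept' if any rule matches the source and port, else 'drop'."""
    addr = ipaddress.ip_address(source)
    for network, rule_port in rules:
        if port == rule_port and addr in network:
            return "accept"
    return "drop"


# The management station used to live in 10.10.0.0/24 ...
old_station = decide("10.10.0.15", 22)
# ... and was later moved to 10.20.0.0/24, but nobody updated the rules.
new_station = decide("10.20.0.15", 22)
# Whoever now occupies the old subnet is still trusted.
leftover_host = decide("10.10.0.99", 22)

print(old_station, new_station, leftover_host)
```

The moved management station is locked out while the old subnet stays trusted, which is exactly the kind of drift that creeps in when nobody owns the rule-set.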

Fail 2 Ban

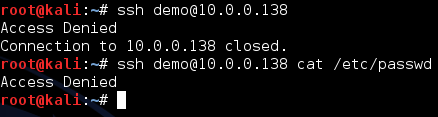

In order to increase the security level in FOS I lodged an RFE to incorporate a tool called fail2ban. (See here) The concept is to monitor logfiles for authentication failures and act at the IP level by adding, adjusting or removing rules in the IPtables ruleset. So if an attacker (or creative employee) is firing off login attempts at the processes available in FOS (webtools, ssh, Telnet, API etc.), the tool can automatically create a rule in IPtables which denies the source IP address access to the specified port and service on that switch. Depending on the configuration of F2B, the rule can be scoped to a protocol (TCP/UDP), a port (like 22 for ssh) and/or an IP address or address segment (i.e. an entire subnet). You can get pretty creative with it, but for the most basic (and mostly default) task it will block an IP address for a certain amount of time. The major benefit is that you not only prevent attackers from brute-forcing a username/password combination and doing something malicious, but you also make sure that the login flood itself cannot turn into a DoS (Denial of Service) attack.
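The monitoring-and-banning idea can be sketched in a few lines of Python. This is not fail2ban's actual code and not anything that runs on FOS: the log line format, the thresholds and the helper names (`scan`, `ban_rule`) are assumptions for illustration, and the iptables command is only built as a string, never executed.

```python
import re
from collections import defaultdict, deque

# Hypothetical log format; real FOS log lines look different.
FAILED_LOGIN = re.compile(
    r"^(?P<ts>\d+) login failed user=(?P<user>\S+) "
    r"ip=(?P<ip>\S+) port=(?P<port>\d+)$"
)

MAX_RETRY = 3     # failures tolerated inside the window
FIND_TIME = 600   # sliding window, in seconds
BAN_TIME = 3600   # how long a ban lasts, in seconds


def ban_rule(ip, port):
    """Build (not execute) an iptables command dropping ip -> port."""
    return f"iptables -I INPUT -s {ip} -p tcp --dport {port} -j DROP"


def scan(lines):
    """Return (ban_expiry, rule) for every source reaching MAX_RETRY."""
    failures = defaultdict(deque)  # (ip, port) -> recent failure timestamps
    banned_until = {}              # (ip, port) -> time the ban expires
    actions = []
    for line in lines:
        match = FAILED_LOGIN.match(line.strip())
        if not match:
            continue  # not an authentication failure
        ts, ip, port = int(match["ts"]), match["ip"], int(match["port"])
        key = (ip, port)
        if banned_until.get(key, 0) > ts:
            continue  # source is already banned
        window = failures[key]
        window.append(ts)
        # Forget failures that have aged out of the window.
        while window and window[0] <= ts - FIND_TIME:
            window.popleft()
        if len(window) >= MAX_RETRY:
            banned_until[key] = ts + BAN_TIME
            actions.append((ts + BAN_TIME, ban_rule(ip, port)))
            window.clear()
    return actions


sample_log = [
    "1000 login failed user=admin ip=10.1.1.50 port=22",
    "1010 login failed user=admin ip=10.1.1.50 port=22",
    "1015 login failed user=root ip=10.1.1.77 port=22",
    "1020 login failed user=admin ip=10.1.1.50 port=22",
    "1030 login failed user=admin ip=10.1.1.50 port=22",
]

for ban_expiry, rule in scan(sample_log):
    print(ban_expiry, rule)
```

With the sample log, only 10.1.1.50 reaches three failures inside the window, so only that address gets a drop rule for port 22. The single failure from 10.1.1.77 is left alone, which is the behaviour you want for the forgotten-script case.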

Wrapping logfiles

When checking logs for any kind of configuration problem it is very useful to have a trace of what has been done. This comes from either a CLI history log or some other logging mechanism. If an attacker has been able to access the system (which is pretty easy when you consider that many switches are still sitting in production environments with their default usernames and passwords), it is also very easy to remove the traces, either by deleting the history logs or by simply kicking off a script with numerous password attempts, which will soon wrap the CLI history so that all back-traces are gone.
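The wrapping effect is easy to demonstrate with a fixed-size buffer. The sketch below assumes a history that keeps only the most recent entries; the capacity of five and the entry texts are made up for the example, and real switch logs are larger but behave the same way once they are full.

```python
from collections import deque

HISTORY_SIZE = 5  # hypothetical capacity; real logs hold far more entries

# A deque with maxlen silently discards the oldest entry when it is full.
history = deque(maxlen=HISTORY_SIZE)

# The entry the attacker would like to disappear.
history.append("malicious configuration change")

# A script then generates enough failed logins to fill the buffer.
for attempt in range(HISTORY_SIZE):
    history.append(f"failed login attempt {attempt}")

evidence_left = any("configuration change" in entry for entry in history)
print(evidence_left)
```

After the flood, the buffer holds nothing but failed login entries and the original change is no longer traceable.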

The Bad News

Despite all the benefits I mentioned above, Brocade engineering has decided not to implement this security mechanism, as they feel that properly configuring the currently available IPtables rule-set prevents most of the things I described. Their standpoint is that it is the responsibility of the administrator to configure the switch in such a way that access is only granted to eligible people, and that the network security mechanisms of firewalls and IPtables rule-sets prevent these attacks.

In a way I agree, but I live in the real world where I’m at the bottom end of the problem funnel, and as such I see the problems I mentioned on a day-to-day basis. The support guys from the different OEMs resolve around 99.99% of these “configuration” issues, so Brocade never sees them and therefore they are never marked as a problem.

The Poll

I would like to know if the mechanism with fail2ban (or a similar tool) would benefit you. Let me know by filling in the poll below.

[socialpoll id=”2249307″]

However, it should be turned off by default. But if it’s there, you can always start using it!