The storage world has always been predictable (from a technical side, that is :-)). This means that data coming from an application through a server and traversing a multitude of connected devices to a spindle (in whatever form or shape) takes the logically shortest, fastest and best available route. These routes are calculated by a protocol called FSPF (Fabric Shortest Path First), which is somewhat analogous to OSPF in the networking world.

Both OSPF and FSPF are based on Dijkstra’s algorithm for calculating the shortest path between any two given points. When you fire up Google Maps and use the “Directions” option to get a route from A to B, the same kind of shortest-path calculation is at work. Obviously you can adjust the paths between those two points to include or exclude certain criteria such as distance or speed before you let loose the Dijkstra calculations. To go back to storage: the results of the calculations, together with secondary criteria (zoning, route costs etc.), determine the routes that are programmed into the ASICs or cross-bar switches. So what does this have to do with load sharing and delivery ordering?
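To make the shortest-path idea a bit more concrete, here is a minimal sketch of Dijkstra’s algorithm over a tiny fabric. The switch names and link costs are made up for illustration and are not vendor defaults, and real FSPF does a lot more (link-state exchange, zoning, and so on) than this toy shows.

```python
import heapq

def shortest_path_costs(links, source):
    """Return the lowest cumulative link cost from source to every reachable switch."""
    graph = {}
    for a, b, cost in links:
        graph.setdefault(a, []).append((b, cost))
        graph.setdefault(b, []).append((a, cost))
    best = {source: 0}
    queue = [(0, source)]
    while queue:
        cost, node = heapq.heappop(queue)
        if cost > best.get(node, float("inf")):
            continue  # stale queue entry, a cheaper path was already found
        for neighbour, link_cost in graph.get(node, []):
            new_cost = cost + link_cost
            if new_cost < best.get(neighbour, float("inf")):
                best[neighbour] = new_cost
                heapq.heappush(queue, (new_cost, neighbour))
    return best

# Hypothetical four-switch fabric; the costs are illustrative only.
links = [
    ("sw1", "sw2", 500),
    ("sw2", "sw4", 500),
    ("sw1", "sw3", 500),
    ("sw3", "sw4", 1000),
]
costs = shortest_path_costs(links, "sw1")
print(costs)
```

In this example the path from sw1 to sw4 via sw2 wins because its total cost is lower than the path via sw3.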

In the communication path between the application and the spindle, the data blocks are likely split into smaller fragments. For instance, an Oracle database IO is, in general, 8KB in size. A Fibre Channel frame, however, carries only about 2KB, which means you have 4 data frames in one or more FC sequences. Now these frames are related, obviously, and they need to be re-assembled at the target side in such a way that the data can either be stored as it was sent by the application or, on the reverse side, processed by the application as it was retrieved from the medium (disk, tape, whatever). It is thus essential that frames are received in order. Fibre Channel normally uses one of two routing policies, with routing tables built on the above-mentioned FSPF algorithm: one based on source ID and destination ID (SID/DID), the other based on source ID, destination ID and originator exchange ID (SID/DID/OXID). The first one is fairly static and doesn’t allow much flexibility with regard to load balancing across multiple same-cost routes.
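The 8KB-into-2KB arithmetic and the need for ordering can be sketched as follows. This is a simplified illustration: the function names are hypothetical, the 2048-byte payload is just the rounded figure used above, and treating any out-of-order arrival as a hard error is an assumption made to keep the example short.

```python
FRAME_PAYLOAD = 2048  # rounded 2KB figure from the text

def split_into_frames(io_block, payload_size=FRAME_PAYLOAD):
    """Cut one I/O into (sequence_count, payload) tuples."""
    return [
        (seq, io_block[offset:offset + payload_size])
        for seq, offset in enumerate(range(0, len(io_block), payload_size))
    ]

def reassemble(frames):
    """Rebuild the I/O, refusing frames that arrive out of order."""
    data = bytearray()
    for expected, (seq, payload) in enumerate(frames):
        if seq != expected:
            raise ValueError(f"frame {seq} arrived where {expected} was expected")
        data += payload
    return bytes(data)

io_block = bytes(range(256)) * 32      # 8192 bytes, standing in for one 8KB database I/O
frames = split_into_frames(io_block)
print(len(frames))                     # number of data frames for this I/O
print(reassemble(frames) == io_block)  # in-order delivery restores the original block
```

Swapping two entries in `frames` before calling `reassemble` raises the `ValueError`, which is the situation in-order delivery is meant to prevent.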

Sideline: huh, what are same-cost routes?

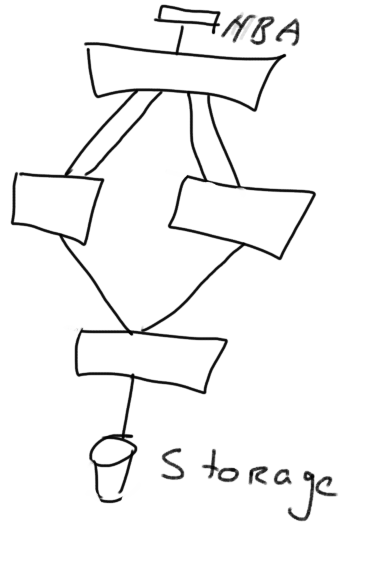

A route as programmed by the switches has a certain cost per path. If there are multiple paths between source and destination, each with the same characteristics, they have the same cost. Frames are always routed along the lowest-cost path. If you check the image you’ll see what I mean.

You see that there is an equal number of links with the same characteristics between the HBA and storage.

This means that the routing algorithm can be fine-tuned to evenly balance the frames across all available links. If the SID/DID method (sometimes also called port-based routing, or PBR) is used, then once a route is programmed in the switches ALL frames between the source and destination will ALWAYS use that particular link until a change in the fabric happens. This also means that you will most likely see an unevenly balanced fabric, because one host can be busier than the other, but since the routes are fixed its frames are unable to traverse other, less-utilised paths. If the routing method also includes the OXID (also called exchange-based routing, or XBR) the fabric can disperse the frames over multiple physical links. The same-cost rule still applies though.
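The difference between the two policies can be sketched with a toy link selector. The XOR-and-modulo hash, the link names and the port addresses are all assumptions for illustration; the selection logic in a real ASIC is vendor-specific and not what is shown here.

```python
from collections import Counter

LINKS = ["isl0", "isl1", "isl2", "isl3"]  # four equal-cost links (hypothetical names)

def pick_link(sid, did, oxid=None, links=LINKS):
    """Pick one equal-cost link; passing oxid switches to exchange-based selection."""
    key = sid ^ did
    if oxid is not None:
        key ^= oxid
    return links[key % len(links)]

sid, did = 0x010200, 0x020300  # made-up 24-bit port addresses

# Port-based: every exchange between this pair lands on the same link.
pbr_usage = Counter(pick_link(sid, did) for _ in range(16))

# Exchange-based: 16 consecutive exchange IDs spread over all four links.
xbr_usage = Counter(pick_link(sid, did, oxid) for oxid in range(16))

print(pbr_usage)
print(xbr_usage)
```

With the port-based key a busy host stays pinned to one link, while adding the OXID to the key lets each new exchange land on a different link. All frames within one exchange still share a link, so ordering inside an exchange is kept.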

In a Brocade environment you pick either PBR or XBR. For FICON, PBR is a requirement and having IOD (In-Order Delivery) turned on is compulsory. Mainframe is far more strict in fabric configurations and basically doesn’t allow dynamic reconfiguration of a fabric.

When XBR is used (and that is in 99.9999% of all Open Systems environments) and a change happens in a fabric (e.g. the addition of an HBA), all existing configurations are preserved but the routing tables are rebuilt from scratch. If IOD is enabled, all existing tables are deleted and the IOD timer delays actions by the routing logic for 500ms. As soon as that timer has expired the routing tables are rebuilt and enabled. As a result, frames that are underway from SID to DID will be dropped at some point because there is simply no route available. You’ll often see the er_unroutable counter increase if a frame is affected by this scenario. It also means that during this 500ms window no frames can be sent in either direction. This is how the IOD logic makes sure that frames are delivered in order.
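The effect of that hold-down window can be illustrated with a very small timeline model. The function name and the arrival pattern are hypothetical, and the model assumes that any frame arriving while no route is programmed simply counts as unroutable, which glosses over buffering inside the switch.

```python
IOD_HOLD_MS = 500  # hold-down time quoted in the text

def classify_frames(arrival_times_ms, change_at_ms, hold_ms=IOD_HOLD_MS):
    """Split frame arrival times into routed and unroutable lists."""
    routed, unroutable = [], []
    for t in arrival_times_ms:
        in_hold_window = change_at_ms <= t < change_at_ms + hold_ms
        (unroutable if in_hold_window else routed).append(t)
    return routed, unroutable

arrivals = list(range(0, 2000, 100))   # one frame every 100 ms for 2 seconds
routed, unroutable = classify_frames(arrivals, change_at_ms=1000)
print(unroutable)                      # frames that hit the window without routes
```

Every frame that lands between the fabric change and the timer expiry has nowhere to go, which is the kind of event the er_unroutable counter reflects.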

In an XBR policy DLS (Dynamic Load Sharing) is enabled by default and as such, upon a fabric change, routing tables are adjusted to evenly balance the number of paths between all initiators and targets. All initiators can use each designated path with every new exchange. Still, with every change in the fabric a re-routing calculation takes place, which can potentially cause frames to drop. Normally this is no problem, since upper-layer protocols like SCSI and IP are very capable of recovering from this. In many scenarios, however, even these short bursts of timeouts cause a problem at the filesystem and/or application layer. To overcome that, Brocade added some functionality to the DLS re-routing logic called “lossless” (how creative :-)). If you enable DLS with the “–lossless” parameter, the logic makes sure that when the routing tables need to be adjusted, the switch ports withhold the R_RDY primitives, so the attached device cannot send new frames once its available credits have been exhausted. As soon as that is done, the new routing tables are calculated and enabled, after which the R_RDY primitives are sent from the switch port to the adjacent device again.
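The credit-withholding idea can be sketched with a toy buffer-to-buffer credit model. The class and its fields are hypothetical, and it assumes the switch would otherwise return an R_RDY immediately for every frame, which is a simplification of real port behaviour.

```python
class CreditedLink:
    """Toy buffer-to-buffer credit model between a device and a switch port."""

    def __init__(self, credits):
        self.credits = credits   # credits the device currently holds
        self.owed = 0            # R_RDYs the switch is holding back
        self.withhold = False    # True while routes are being reprogrammed

    def send_frame(self):
        """Device tries to send; returns False when it has no credit left."""
        if self.credits == 0:
            return False
        self.credits -= 1
        if self.withhold:
            self.owed += 1       # switch keeps the R_RDY for later
        else:
            self.credits += 1    # R_RDY returned straight away
        return True

    def resume(self):
        """New routes are active: release every withheld R_RDY."""
        self.withhold = False
        self.credits += self.owed
        self.owed = 0

link = CreditedLink(credits=4)
link.withhold = True                           # routing tables are being adjusted
attempts = [link.send_frame() for _ in range(6)]
print(attempts)                                # sends succeed until credits run out
link.resume()
print(link.credits)                            # credits are back after the R_RDYs arrive
```

The point of the sketch is that the device is paused rather than having its frames dropped: it stalls once its credits are gone and carries on when the R_RDYs come back.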

Be aware, however, that removing a link physically (i.e. pulling the cable) or by disabling a port (portdisable X) will always cause frames to drop, especially on E-ports. If you need to remove an E-port where multiple exist between two switches, use the “portdecom” command first. This way the routing tables are adjusted in a similar way as with “DLS –lossless”, so new frames entering the switch and destined for a domain on the remote side of the ISL will be re-routed over the remaining E-port(s).

I’m aware this is a complex subject and as such it’s pretty difficult to explain. If I was unclear in any of the above please let me know so I can adjust the wording or provide additional info.

Cheers,

Erwin
